Policy or Value ? Loss Function and Playing Strength in AlphaZero-like Self-play

Por um escritor misterioso

Last updated 08 maio 2024

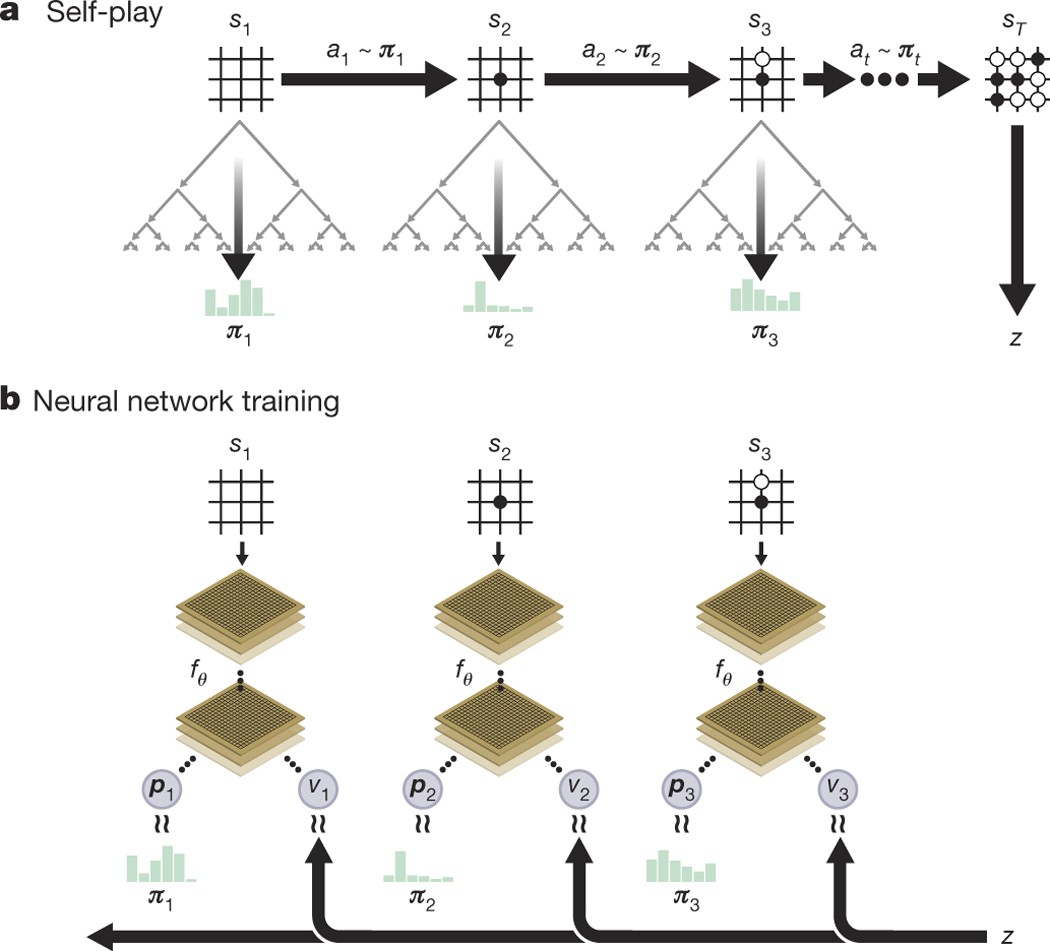

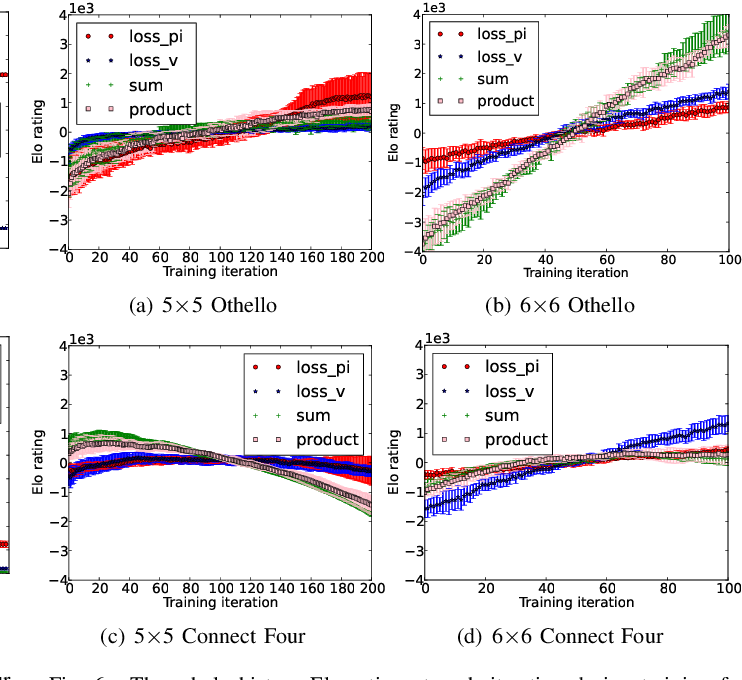

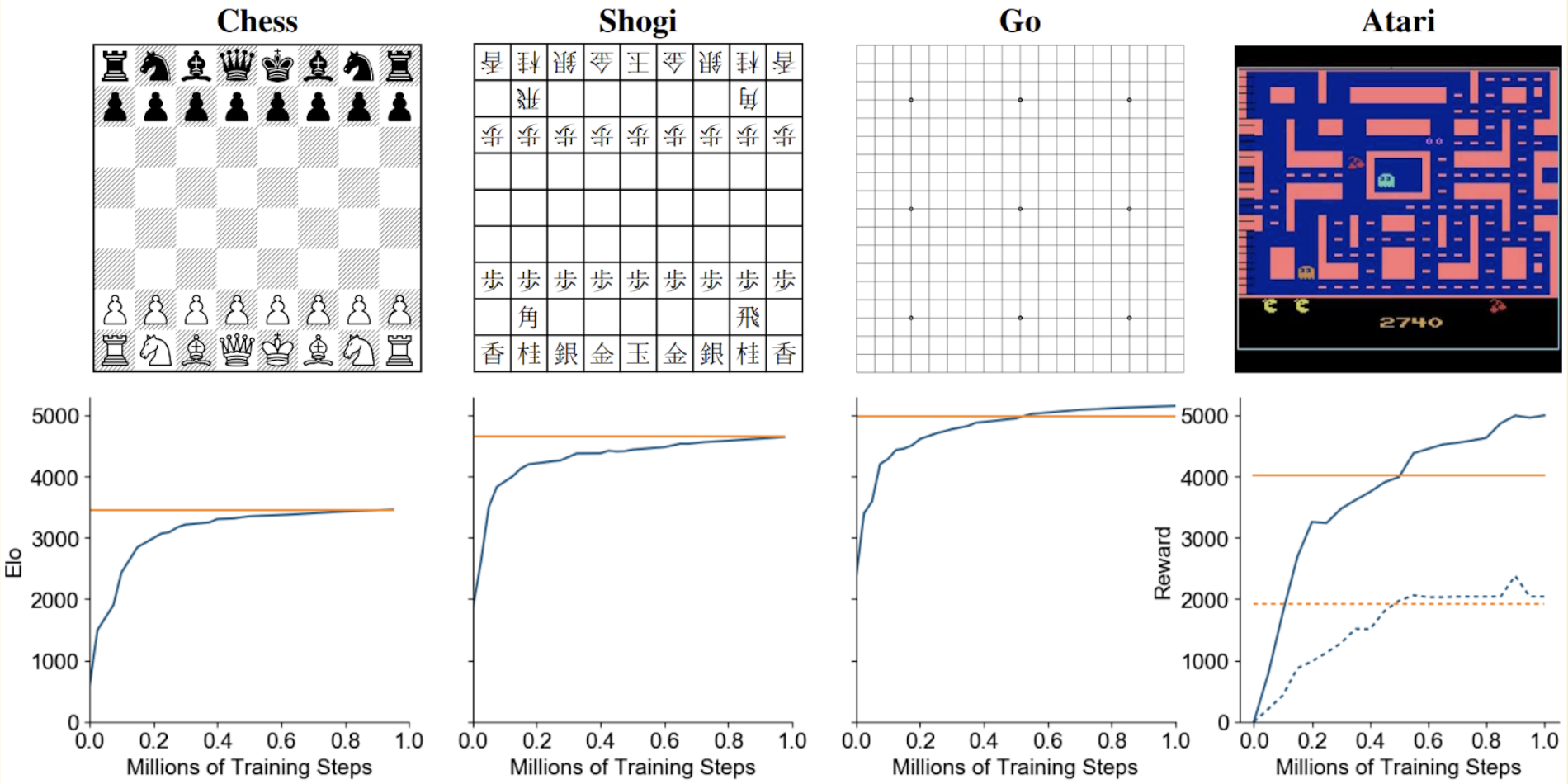

Results indicate that, at least for relatively simple games such as 6x6 Othello and Connect Four, optimizing the sum, as AlphaZero does, performs consistently worse than other objectives, in particular by optimizing only the value loss. Recently, AlphaZero has achieved outstanding performance in playing Go, Chess, and Shogi. Players in AlphaZero consist of a combination of Monte Carlo Tree Search and a Deep Q-network, that is trained using self-play. The unified Deep Q-network has a policy-head and a value-head. In AlphaZero, during training, the optimization minimizes the sum of the policy loss and the value loss. However, it is not clear if and under which circumstances other formulations of the objective function are better. Therefore, in this paper, we perform experiments with combinations of these two optimization targets. Self-play is a computationally intensive method. By using small games, we are able to perform multiple test cases. We use a light-weight open source reimplementation of AlphaZero on two different games. We investigate optimizing the two targets independently, and also try different combinations (sum and product). Our results indicate that, at least for relatively simple games such as 6x6 Othello and Connect Four, optimizing the sum, as AlphaZero does, performs consistently worse than other objectives, in particular by optimizing only the value loss. Moreover, we find that care must be taken in computing the playing strength. Tournament Elo ratings differ from training Elo ratings—training Elo ratings, though cheap to compute and frequently reported, can be misleading and may lead to bias. It is currently not clear how these results transfer to more complex games and if there is a phase transition between our setting and the AlphaZero application to Go where the sum is seemingly the better choice.

Why Artificial Intelligence Like AlphaZero Has Trouble With the

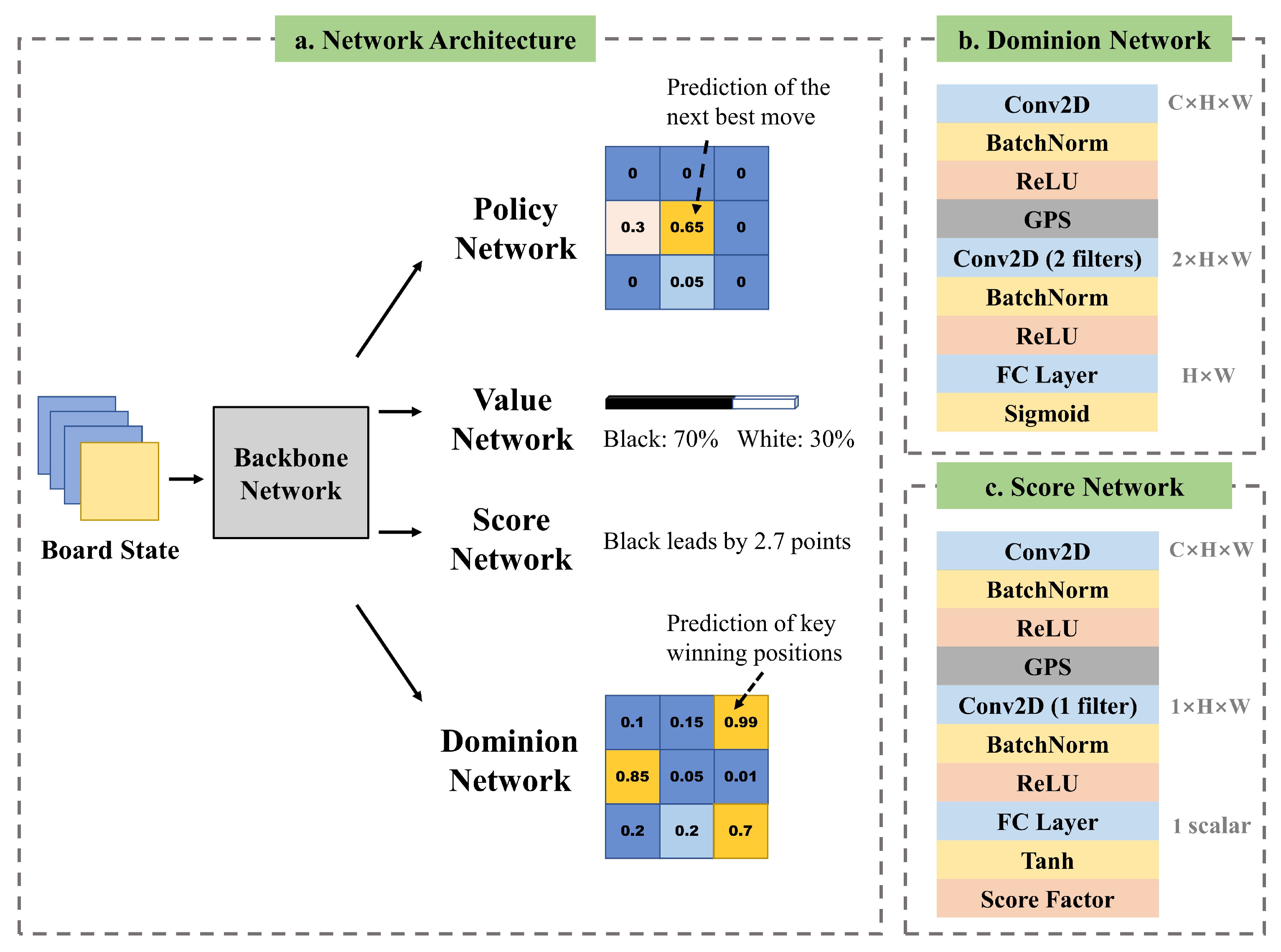

Electronics, Free Full-Text

Electronics, Free Full-Text

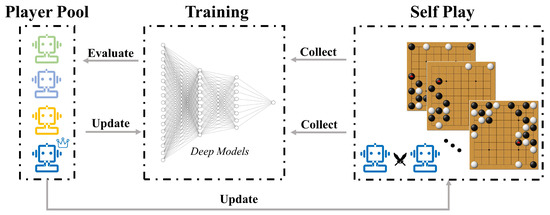

How to build your own AlphaZero AI using Python and Keras

The future is here – AlphaZero learns chess

Mastering the game of Go without human knowledge

Policy or Value ? Loss Function and Playing Strength in AlphaZero

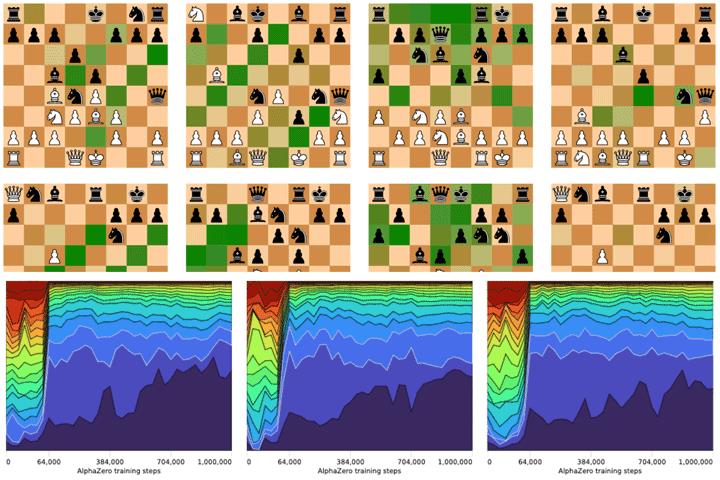

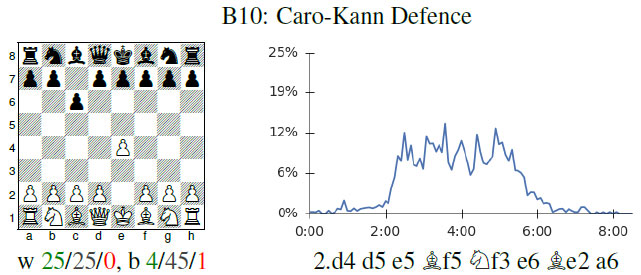

Acquisition of Chess Knowledge in AlphaZero – arXiv Vanity

Simple Alpha Zero

AlphaZero

Reimagining Chess with AlphaZero, February 2022

Representation Matters: The Game of Chess Poses a Challenge to

Recomendado para você

-

Acquisition of Chess Knowledge in AlphaZero08 maio 2024

-

The future is here – AlphaZero learns chess08 maio 2024

The future is here – AlphaZero learns chess08 maio 2024 -

Chess's New Best Player Is A Fearless, Swashbuckling Algorithm08 maio 2024

Chess's New Best Player Is A Fearless, Swashbuckling Algorithm08 maio 2024 -

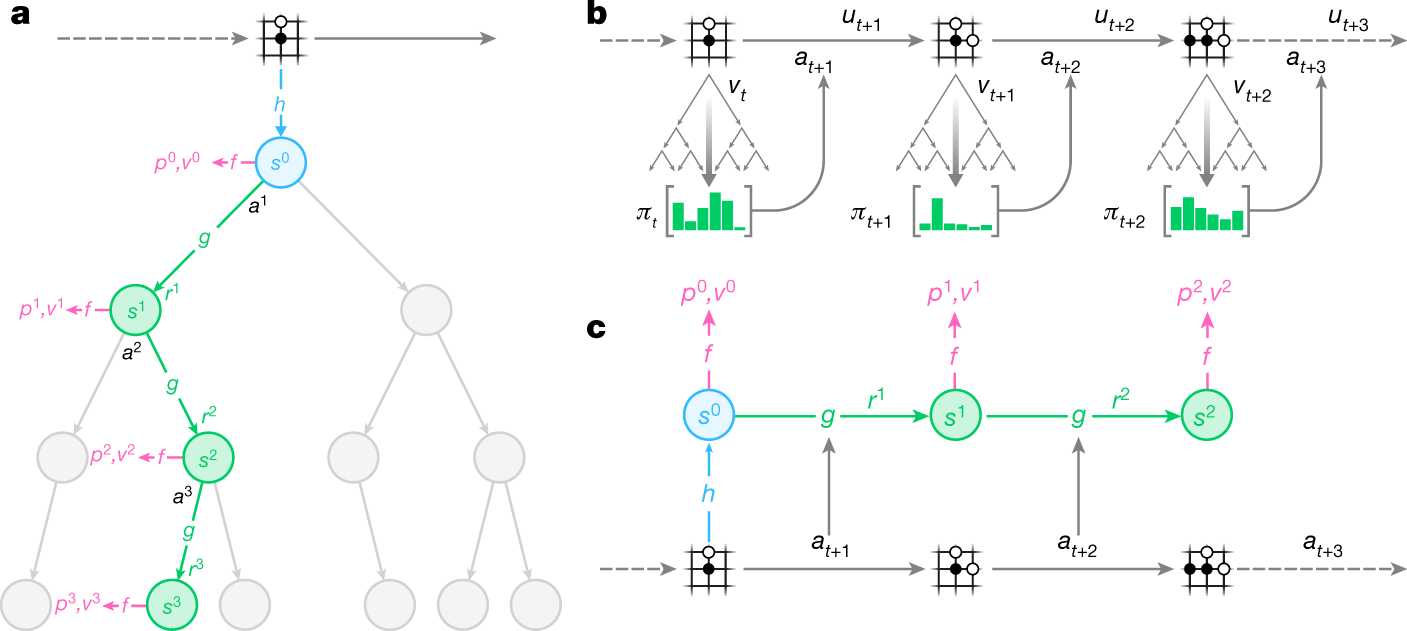

Mastering Atari, Go, chess and shogi by planning with a learned model08 maio 2024

Mastering Atari, Go, chess and shogi by planning with a learned model08 maio 2024 -

The Data Problem III: Machine Learning Without Data - Synthesis AI08 maio 2024

The Data Problem III: Machine Learning Without Data - Synthesis AI08 maio 2024 -

Chess for All Ages: AlphaZero Match Conditions08 maio 2024

Chess for All Ages: AlphaZero Match Conditions08 maio 2024 -

DeepMind's AlphaGo Zero and AlphaZero08 maio 2024

DeepMind's AlphaGo Zero and AlphaZero08 maio 2024 -

) CHESS#127808 maio 2024

CHESS#127808 maio 2024 -

Understanding AlphaZero Neural Network's SuperHuman Chess Ability - MarkTechPost08 maio 2024

Understanding AlphaZero Neural Network's SuperHuman Chess Ability - MarkTechPost08 maio 2024 -

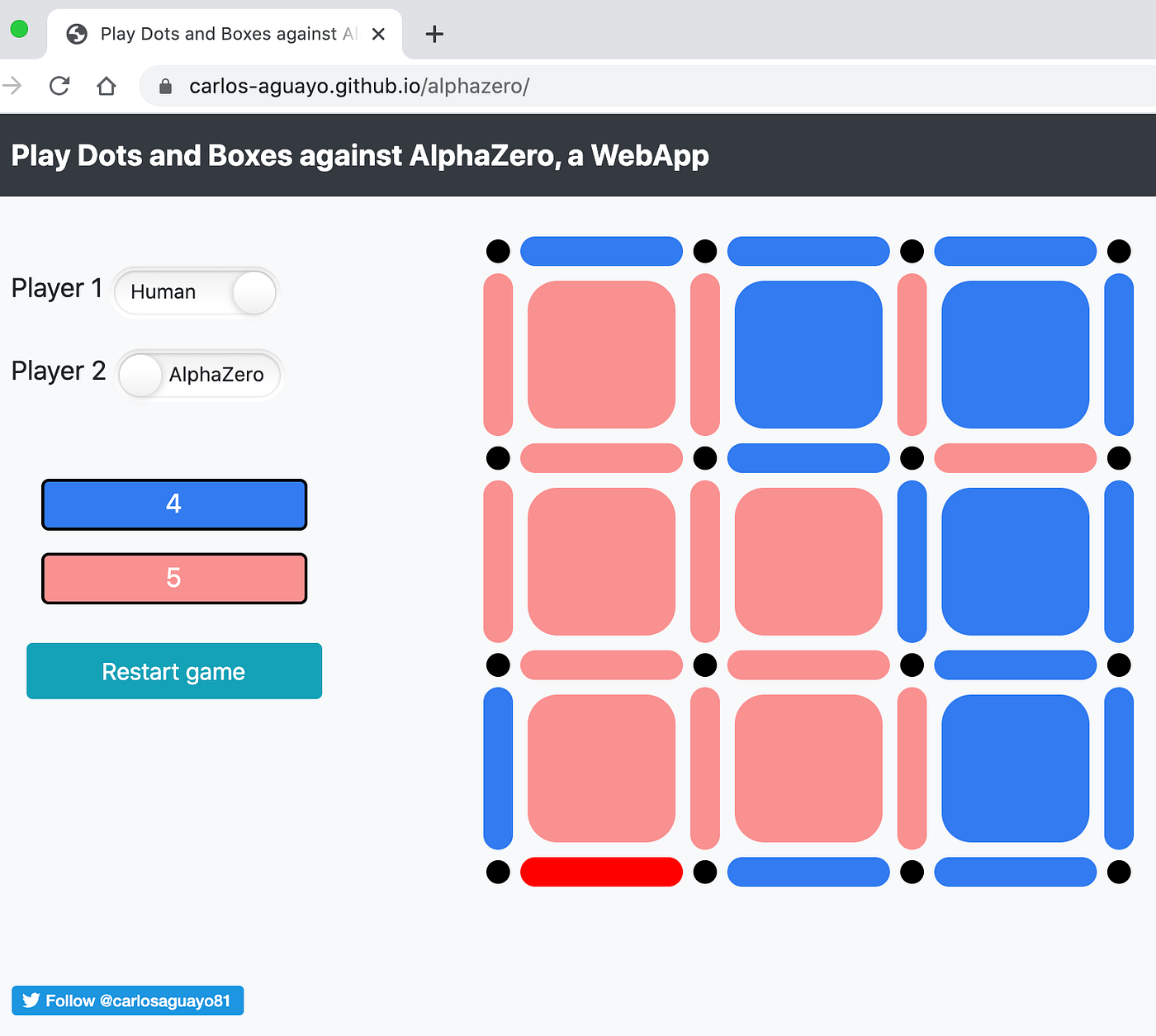

AlphaZero, a novel Reinforcement Learning Algorithm, in JavaScript, by Carlos Aguayo08 maio 2024

AlphaZero, a novel Reinforcement Learning Algorithm, in JavaScript, by Carlos Aguayo08 maio 2024

você pode gostar

-

Lampyridaes on X: Making a #doorsroblox au where the entities serve as hotel staff members! Here's our first hotel worker, Narrator, who's based off the death messages. His in-depth knowledge of his08 maio 2024

Lampyridaes on X: Making a #doorsroblox au where the entities serve as hotel staff members! Here's our first hotel worker, Narrator, who's based off the death messages. His in-depth knowledge of his08 maio 2024 -

CANCHA DE MIDLAND08 maio 2024

CANCHA DE MIDLAND08 maio 2024 -

boruto vs kawaii episode|TikTok Search08 maio 2024

boruto vs kawaii episode|TikTok Search08 maio 2024 -

Raphinha é expulso, e Barcelona fica no empate com o Getafe08 maio 2024

Raphinha é expulso, e Barcelona fica no empate com o Getafe08 maio 2024 -

Estab-Life: Great Escape Online - Assistir todos os episódios completo08 maio 2024

Estab-Life: Great Escape Online - Assistir todos os episódios completo08 maio 2024 -

Decathlon - Av. Mackenzie, 181608 maio 2024

Decathlon - Av. Mackenzie, 181608 maio 2024 -

Slow and dumb as always : r/Konosuba08 maio 2024

Slow and dumb as always : r/Konosuba08 maio 2024 -

C Programming Online Test Pre-hire Assessment by Xobin08 maio 2024

C Programming Online Test Pre-hire Assessment by Xobin08 maio 2024 -

Fly Race Script - Auto Rebirth, Auto Buy Eggs & More (2023)08 maio 2024

Fly Race Script - Auto Rebirth, Auto Buy Eggs & More (2023)08 maio 2024 -

Luffy One Piece Digital Download Anime Svg Anime Print08 maio 2024

Luffy One Piece Digital Download Anime Svg Anime Print08 maio 2024